Have you ever asked a chatbot a simple question, only to get an answer that’s outdated or just plain wrong? Luckily, RAG use cases demonstrate that even a flawed LLM assistant can be grounded in reliable sources and turn complex knowledge into responses you can actually trust.

The problem is that language models, no matter how advanced, aren’t designed to pull facts on demand. They generate text based on patterns learned from training materials, which often come from public sources and can quickly become outdated. Unfortunately, that means relying on them as-is can lead to mistakes, misinformation, and frustrated customers or employees.

When you bring these tools into a business setting, such as for customer support, internal assistants, or sales enablement, generic answers aren't enough. You might need a system that can reference your company’s policies, manuals, and internal documents to produce responses that are accurate, timely, and aligned with your business.

In this article, we’ll explore the best agentic RAG use cases that businesses are applying, showing how RAG can improve customer experiences, speed up decision-making, and help teams access the right information exactly when they need it.

Retrieval-augmented generation, or RAG, is a method that helps improve the answers a large language model (LLM) produces by connecting it to external sources of information. Instead of relying solely on what the model learned during training, RAG allows it to pull in up-to-date and relevant context, reducing errors and improving the overall quality of its responses.

Large language models are incredibly powerful, but they often operate as a “black box.” They detect patterns between words extremely well, yet they can struggle with meaning and context. Without additional guidance, this can lead to mistakes or fabricated information, commonly called hallucinations.

RAG addresses this limitation by integrating real-time knowledge and domain-specific expertise. Using APIs, databases, or other reference materials, the model can consult authoritative sources before generating an answer. Essentially, it is like giving the AI an open book to consult instead of forcing it to rely only on memory.
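
The retrieve-then-generate flow described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `DOCUMENTS` store, the word-overlap scoring, and the prompt wording are all hypothetical, and a production system would typically use an embedding-based search index and pass the resulting prompt to an LLM.

```python
from __future__ import annotations

import re

# Hypothetical in-memory "knowledge base"; a real system would query a
# search index, database, or API instead.
DOCUMENTS = {
    "refund-policy": "Refunds are available within 30 days of purchase.",
    "shipping-policy": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware is covered by a 2 year limited warranty.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> list[str]:
    """Return ids of the documents sharing the most words with the question."""
    q_tokens = tokenize(question)
    scored = [
        (len(q_tokens & tokenize(body)), doc_id)
        for doc_id, body in DOCUMENTS.items()
    ]
    # Highest overlap first; ties are broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:top_k] if score > 0]


def build_prompt(question: str) -> str:
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in retrieve(question))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("Are refunds available after purchase?"))
```

The bracketed document id in the prompt is what lets the final answer cite its source, which is the "open book" part of the analogy.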

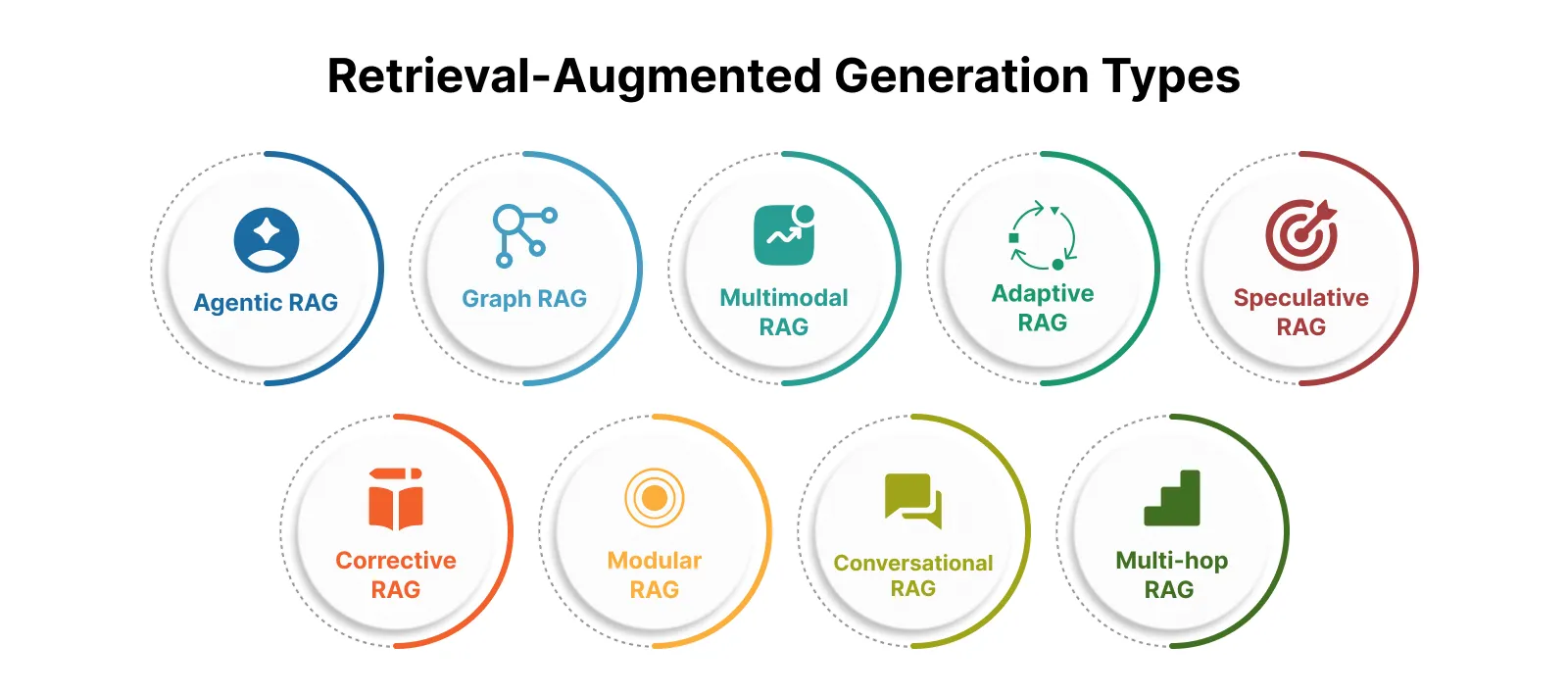

Retrieval-augmented generation comes in many forms, and the version you choose can truly shape how well it works for you. Different architectures solve different problems, so understanding the options becomes a strategic decision rather than just a technical detail.

Take DoorDash as one of the RAG use case examples. The company implemented a RAG-powered system to support its delivery drivers. Instead of sending generic replies, the system retrieves relevant insights from previous cases and internal documentation before generating an answer. Drivers receive faster and more accurate support, which reduces friction, speeds up issue resolution, and improves the overall experience.

Such retrieval-augmented generation examples show how the technology can drive measurable operational impact and strengthen day-to-day performance. Choosing the right type can make a big difference in how effectively your business leverages information.

Asking a digital assistant a question and receiving an answer that is accurate, up-to-date, and grounded in real data is becoming increasingly important, especially in fields like healthcare. AI RAG agents assist healthcare professionals by allowing language models to go beyond their original training and pull relevant information from trusted sources, delivering responses that are precise and context-aware.

Instead of relying only on static training data, a RAG system searches articles, databases, and internal company materials before generating an answer. As a result, you can get current and actionable insights without retraining the model every time information changes. Research confirms the impact, with RAG-enabled systems reaching up to 94% correctness in clinical testing compared to significantly lower accuracy from standalone models.

Now let’s take a look at the most common RAG AI use cases and where they deliver the greatest value.

Enterprise RAG use cases make customer support faster, easier, and more satisfying for both your team and customers. Virtual assistants get instant access to internal documents, ticket histories, and FAQs, so they can answer questions with real context instead of generic scripts. Customers get faster, more accurate responses, and your support team finally gets a breather.

Multilingual support takes the advantage even further. When a customer reports a bug, the assistant instantly pulls the latest notes from engineering or the internal wiki and provides the current status. It can generate responses in any language, making global support much easier. No more digging through documents or waiting for updates, only clear and accurate answers every time.

Finding a document in a large company can feel impossible. RAG-powered enterprise search cuts through the chaos, letting you ask questions in plain language and get clear answers pulled from multiple systems such as cloud storage, CRMs, and knowledge bases.

The real magic happens when it connects the dots. Instead of just matching keywords, it understands what you’re really asking and combines information from different sources into a complete picture. Employees stop wasting half their day hunting for files, and decisions get made faster.

LLM RAG use cases finally give legal teams a tool that actually understands contracts. Ask about clauses or obligations, and get clear, sourced answers in minutes instead of days. It flags risks, spots unusual terms, and compares contracts against company policies automatically.

It also bridges gaps between teams. Legal can cross-check with procurement, finance, or HR instantly, reducing back-and-forth emails and keeping everyone on the same page.

RAG, often delivered through custom software development, changes the game for finance teams. Analysts can ask questions in plain English and instantly access information from internal systems, market feeds, and financial reports. Tasks that once took hours of juggling spreadsheets and dashboards now happen in minutes.

Internal teams gain advantages as well. Budget planning, forecasting, and compliance checks are automated, allowing teams to focus on analysis instead of sifting through files.

New hires no longer need to drown in PDFs or pester HR for answers. An agentic AI assistant chats naturally, pulling verified information from HR documents and onboarding guides. Employees get guidance, HR stops repeating itself, and onboarding becomes smooth and consistent worldwide.

RAG transforms IT support from endless back-and-forth into fast, accurate problem-solving. Assistants understand internal docs, past tickets, and system logs, giving users real solutions instead of generic troubleshooting steps.

They even learn patterns over time, suggesting fixes before issues escalate, and integrate with monitoring tools to prevent problems before users notice them.

Keeping documentation accurate is always time-consuming. RAG for business generates and updates manuals, specs, and guides directly from your codebase, version histories, and support conversations. Teams always have the most current information without needing to constantly manage documentation.

Product managers, developers, and support staff all work from the same source of truth, improving alignment and cutting down on delays.

RAG-backed tools, including innovative cloud-based healthcare solutions, help compliance teams stay on top of constantly changing regulations. They scan company communications and internal records, flag risks, and generate audit-ready summaries automatically.

When rules shift or audits loom, teams stay ahead of the curve, reducing stress and preventing costly mistakes.

Retrieval-augmented generation use cases in retail transform RFP chaos into a smooth, organized process. The system gathers accurate information from all sales materials to generate fast, consistent responses for proposals and RFIs. Sales reps can answer technical or competitive questions instantly without searching for details.

Teams stay aligned across locations, reducing errors and ensuring outdated information doesn’t slow down deals.

Finally, RAG transforms weeks of research into seconds. Agentic reasoning pulls from scientific papers, market reports, and internal libraries to provide contextualized answers with sources included. Teams can spot trends, gaps, and conflicts quickly, making research faster and more reliable.

It even tracks trends over time, suggesting new directions and highlighting emerging insights.

You have probably experienced, at least once, the frustration of relying on answers that are outdated, vague, or simply wrong. When teams or customers cannot access accurate information quickly, productivity drops, mistakes increase, and trust erodes. Agentic RAG for business intelligence changes that dynamic, giving your tools access to current, trusted, and context-specific information and turning slow, error-prone responses into fast, reliable ones.

In a clinical assessment across 14 scenarios, a RAG-enabled model achieved 96.4% accuracy, outperforming expert human responses, which reached 86.6%. Results like these show how powerful retrieval-backed systems can be when precision truly matters.

Besides, the value goes well beyond improving accuracy. It helps save time, lower operational costs, and enable teams to make smarter decisions every day, keeping your business ahead of the competition.

Language models are powerful, but they rely on pre-set training material that can quickly become outdated or miss company-specific details. Without high-quality cloud application development and access to trusted sources, answers can be inconsistent or, worse, completely made up. Connecting the model to authoritative information keeps responses accurate, reliable, and aligned with what actually matters for your business.

Knowing where an answer comes from makes all the difference. RAG LLM use cases that reference specific sources give your team transparency and confidence, showing exactly which information shaped the response. Stakeholders can rely on the output because it’s grounded in verifiable material.

Industries move fast, and yesterday’s information can quickly lose relevance. Pulling in live updates from news, financial reports, social platforms, or internal systems ensures answers stay up to date. Teams save time and money since there’s no need for constant retraining or expensive updates.

Developers can create tools tailored to your business needs while keeping sensitive information secure. Access to private data can be tightly managed, ensuring only authorized users see it. This makes it possible to deliver smart, reliable responses without compromising safety or compliance.
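
One common way to keep private data restricted is to filter retrieved content by the user's permissions before it ever reaches the model. The sketch below assumes a simple role-based scheme; the `Chunk` type, the role names, and the sample data are hypothetical and not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A retrievable piece of text plus the roles allowed to read it."""
    chunk_id: str
    text: str
    allowed_roles: frozenset


# Hypothetical sample data for illustration only.
CHUNKS = [
    Chunk("handbook", "Office hours are 9 to 5.", frozenset({"support", "hr"})),
    Chunk("salaries", "Salary bands by level.", frozenset({"hr"})),
]


def filter_by_access(chunks, user_roles):
    """Keep only the chunks that share at least one role with the user."""
    roles = set(user_roles)
    return [c for c in chunks if c.allowed_roles & roles]


visible = filter_by_access(CHUNKS, {"support"})
print([c.chunk_id for c in visible])
```

Applying the filter at retrieval time, rather than after generation, means restricted text never enters the prompt in the first place.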

Outdated answers can waste time, create confusion, and even damage credibility. The system taps into the latest external sources to deliver responses that reflect what’s happening right now. Recent product updates, market trends, and new company policies are all included, giving your teams and customers information that is accurate, relevant, and ready to act on.

Mistakes happen when a model tries to fill in the gaps without real context. When RAG enterprise use cases ground responses in trusted sources, the risk of incorrect or fabricated answers drops dramatically. Even better, outputs can include references, so your team or clients can quickly verify the information if needed. Accuracy becomes the standard, not the exception, giving everyone confidence in the results.

Generic answers just don’t cut it when your business operates in a specialized field. With access to your own proprietary or domain-specific knowledge, the system provides responses that truly reflect your organization’s context. Teams get answers tailored to their needs, clients receive informed guidance, and business decisions are backed by knowledge that actually matters.

Customizing models used to require expensive retraining or complex engineering work. Now, teams can implement this approach quickly, without massive technical overhead, while still keeping responses up to date. Updates happen seamlessly, so your business stays agile, your knowledge stays fresh, and your budget stays intact. Efficiency and savings go hand in hand, letting your team focus on higher-value work instead of maintaining technology.

RAG can transform how your business uses information, but rolling it out at scale isn’t without its hurdles. Even the most advanced systems can stumble if the setup isn’t carefully planned.

Even the smartest models can produce poor answers if they pull in irrelevant or low-quality sources. It’s essential to build a retrieval pipeline that consistently delivers the right content. That means choosing the best embedding models, defining similarity measures carefully, and ranking results effectively so the model can focus on what truly matters.
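
To make the similarity and ranking step concrete, here is a minimal sketch that scores chunks with cosine similarity and drops weak matches. The three-dimensional vectors, the `min_score` cutoff, and the chunk names are illustrative assumptions; real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def rank_chunks(query_vec, chunk_vecs, top_k=2, min_score=0.3):
    """Return (chunk_id, score) pairs, best first, dropping weak matches."""
    scored = [
        (chunk_id, cosine_similarity(query_vec, vec))
        for chunk_id, vec in chunk_vecs.items()
    ]
    scored = [pair for pair in scored if pair[1] >= min_score]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy "embeddings" (hypothetical values, not produced by a real model).
CHUNK_VECTORS = {
    "pricing": [0.9, 0.1, 0.0],
    "security": [0.0, 1.0, 0.1],
    "onboarding": [0.1, 0.0, 1.0],
}

print(rank_chunks([1.0, 0.2, 0.0], CHUNK_VECTORS))
```

The score threshold matters as much as the ordering: returning nothing is often better than handing the model a loosely related chunk.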

Feeding too much content into a RAG system can overwhelm the model, dilute important details, or cause key information to be truncated. Smart chunking strategies help balance context with efficiency, ensuring the model can process information without losing focus or coherence.
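
One simple chunking strategy is a sliding window with overlap, so that a sentence cut at a boundary still appears whole in a neighboring chunk. The sketch below splits on whitespace for brevity; the default sizes are arbitrary assumptions, and real pipelines often count model tokens or split on headings and paragraphs instead.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into chunks of at most `chunk_size` words, where each
    chunk repeats the last `overlap` words of the previous one."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```

Larger chunks preserve more context per retrieval hit, while smaller ones keep the prompt focused, so the right sizes usually have to be tuned against real queries.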

The power of retrieval-augmented generation comes from its access to up-to-date knowledge. Without automated updates or regular ingestion of new content, indexes can quickly go stale, leading to outdated responses or even hallucinations. Maintaining current information is critical to preserving accuracy and trustworthiness.
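
A lightweight way to automate freshness is to compare a fingerprint of each source document with the fingerprint stored at indexing time, then re-embed only what changed. This is a sketch under assumptions: `plan_reindex` and the dictionary layout are hypothetical, and a real pipeline would run such a check on a schedule or on change events.

```python
import hashlib


def fingerprint(text):
    """Stable content hash used to detect changed documents."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(source_docs, indexed_fingerprints):
    """Compare current sources with what the index holds.

    Returns (to_upsert, to_delete): ids that are new or changed, and ids
    that no longer exist in the sources.
    """
    to_upsert = sorted(
        doc_id
        for doc_id, text in source_docs.items()
        if indexed_fingerprints.get(doc_id) != fingerprint(text)
    )
    to_delete = sorted(
        doc_id for doc_id in indexed_fingerprints if doc_id not in source_docs
    )
    return to_upsert, to_delete


# Hypothetical state: "a" changed, "b" is unchanged, "c" is new, "d" was removed.
sources = {"a": "policy v2", "b": "unchanged", "c": "brand new"}
indexed = {
    "a": fingerprint("policy v1"),
    "b": fingerprint("unchanged"),
    "d": fingerprint("retired doc"),
}
print(plan_reindex(sources, indexed))
```

Deleting retired documents is as important as adding new ones, since a stale chunk can be retrieved and cited just as confidently as a current one.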

Working with large datasets or external APIs can introduce delays in retrieving, ranking, and generating responses. Managing latency ensures users get answers quickly, keeping workflows smooth and avoiding frustration from slow responses.
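
Caching is one of the simplest latency levers: repeated or near-identical questions should not pay for the same expensive call twice. The sketch below uses Python's standard `functools.lru_cache`; the `embed_query` function is a stand-in with a fake computation rather than a real embedding model, and the normalization rule is an assumption.

```python
from functools import lru_cache

CALLS = {"embed": 0}  # counts how often the slow path actually runs


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants share a cache key."""
    return " ".join(text.lower().split())


@lru_cache(maxsize=1024)
def embed_query(text):
    """Stand-in for a slow embedding call (hypothetical, not a real model)."""
    CALLS["embed"] += 1
    # Toy features returned as a tuple so the cached value is immutable.
    return (len(text), sum(ch.isdigit() for ch in text), text.count(" "))


embed_query(normalize("Reset  my Password"))
embed_query(normalize("reset my password"))  # served from the cache
print(CALLS["embed"], embed_query.cache_info().hits)
```

The same idea extends to caching retrieval results or full answers, as long as cache entries are invalidated when the underlying documents change.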

Traditional evaluation methods don’t always capture the quality of hybrid systems like retrieval-augmented generation. Assessing output accuracy requires a mix of human judgment, relevance scoring, and groundedness checks to make sure the model’s responses are accurate, coherent, and actionable.
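
As a rough illustration of a groundedness check, the sketch below measures what share of an answer's sentences are mostly covered by words found in the retrieved context. It is a crude lexical proxy with an arbitrary `threshold`, not an established metric; production evaluations usually combine human review with model-based or entailment-based scoring.

```python
import re


def _tokens(text):
    """Lowercase word tokens of a text, as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness(answer, context, threshold=0.6):
    """Share of answer sentences whose words mostly appear in the context."""
    context_tokens = _tokens(context)
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        words = _tokens(sentence)
        if words and len(words & context_tokens) / len(words) >= threshold:
            supported += 1
    return supported / len(sentences)


context = "Refunds are available within 30 days of purchase."
answer = "Refunds are available within 30 days. Shipping is free worldwide."
print(groundedness(answer, context))
```

Here the second sentence has no support in the context, so it pulls the score down and flags the answer for review.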

The promise of retrieval-augmented generation is enormous, and its impact on enterprise applications is already becoming clear. Its real strength lies in improving reliability and effectiveness, helping your venture reduce errors and make decisions with greater confidence.

Companies are already putting it to work. Lumen Technologies, for example, implemented an internal knowledge assistant that allows employees to quickly retrieve accurate information from vast technical documentation. Instead of manually searching through files, employees receive precise, contextual answers in seconds, which improves productivity and reduces operational mistakes.

Connecting generative systems to real-time information keeps outputs grounded in relevant and trustworthy sources. Healthcare and finance teams benefit from faster insights and stronger data accuracy. Implementation requires solid data infrastructure, efficient retrieval pipelines, and continuous optimization, but the long-term value is clear.

As the technology evolves, RAG in business will process larger volumes of data, respond faster, and support even more complex use cases. The ultimate goal remains the same: to create generative tools that are smarter, more dependable, and ready for real-world business demands.
